Demo

Different models' behavior on the copying memory task. The vectors show each model's hidden state over time, and the models behave differently during the reading, waiting and writing periods.
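The three periods in the caption come from how copying-task sequences are built. The sketch below assumes the common formulation from earlier unitary-RNN work: 10 data symbols drawn from an alphabet of 8, then `delay - 1` blanks, a delimiter, and 10 more blanks. The target is all blanks until the model has to write the 10 symbols back. The sizes and the function name `copying_task_example` are illustrative assumptions and are not taken from this page.

```python
import random


def copying_task_example(delay, num_symbols=10, alphabet_size=8, rng=None):
    """Return (inputs, targets) for one copying-memory sequence (assumed formulation).

    Data symbols are 1..alphabet_size, 0 is the blank, alphabet_size + 1 is the delimiter.
    Both lists have length delay + 2 * num_symbols.
    """
    rng = rng or random.Random()
    blank, delimiter = 0, alphabet_size + 1
    data = [rng.randint(1, alphabet_size) for _ in range(num_symbols)]
    # Reading period: the data symbols. Waiting period: blanks, ending with the delimiter cue.
    inputs = data + [blank] * (delay - 1) + [delimiter] + [blank] * num_symbols
    # Writing period: right after the delimiter, the model must reproduce the data.
    targets = [blank] * (delay + num_symbols) + data
    return inputs, targets


if __name__ == "__main__":
    x, y = copying_task_example(delay=5, rng=random.Random(0))
    print(x)
    print(y)
```

A model that solves the task has to hold the data in its hidden state for the entire waiting period. This is where the different hidden-state behaviors become visible.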

Abstract

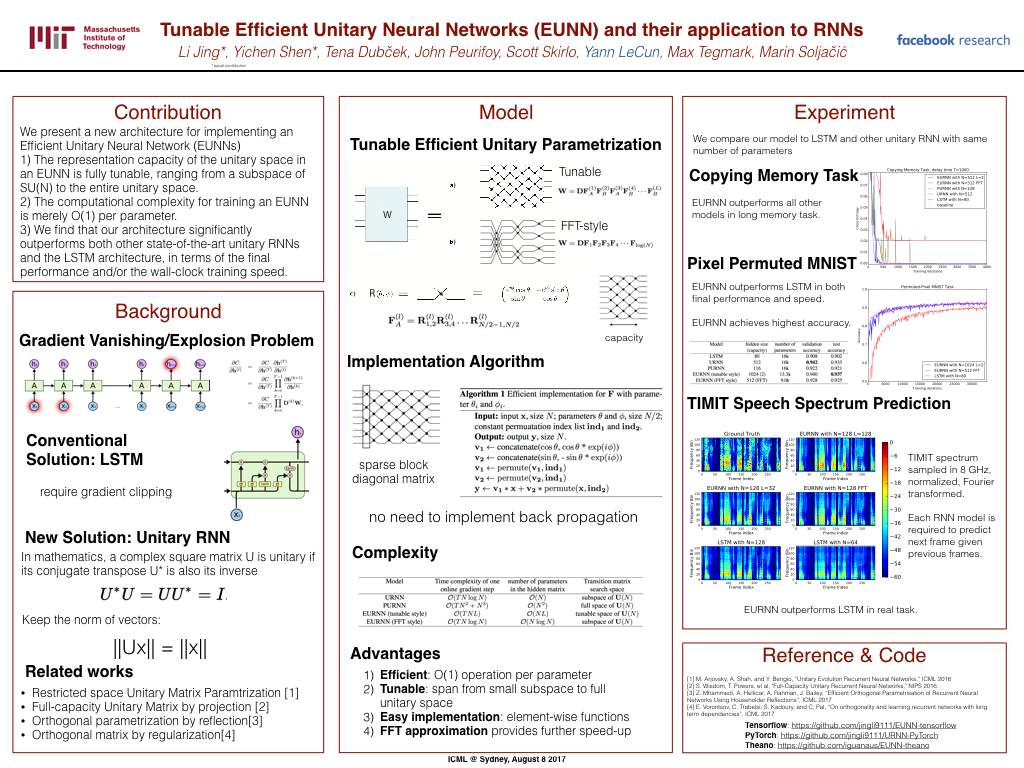

Using unitary (instead of general) matrices in artificial neural networks (ANNs) is a promising way to solve the gradient explosion/vanishing problem, as well as to enable ANNs to learn long-term correlations in the data. This approach appears particularly promising for Recurrent Neural Networks (RNNs). In this work, we present a new architecture for implementing an Efficient Unitary Neural Network (EUNN); its main advantages can be summarized as follows. Firstly, the representation capacity of the unitary space in an EUNN is fully tunable, ranging from a subspace of SU(N) to the entire unitary space. Secondly, the computational complexity for training an EUNN is merely O(1) per parameter. Finally, we test the performance of EUNNs on the standard copying task, the pixel-permuted MNIST digit recognition benchmark, and the TIMIT speech prediction task. We find that our architecture significantly outperforms both other state-of-the-art unitary RNNs and the LSTM architecture in terms of final performance and/or wall-clock training speed. EUNNs are thus promising alternatives to conventional RNNs and LSTMs for a wide variety of applications.
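The abstract does not say how the unitary matrix is parameterized. Below is a minimal sketch, assuming the matrix is built as alternating layers of 2x2 rotations followed by a diagonal phase layer. The rotation form, the pairing pattern, and the names `rotate_pair`, `layer_pairs`, `random_params` and `tunable_unitary_apply` are illustrative assumptions, not the authors' code. The sketch shows how the number of layers could act as the capacity knob, and why applying the matrix costs O(1) work per parameter: each rotation holds two angles and touches only two entries.

```python
import cmath
import math
import random


def rotate_pair(y, i, j, theta, phi):
    """Apply the (assumed) 2x2 unitary [[e^{i phi} cos t, -e^{i phi} sin t],
    [sin t, cos t]] to entries i and j of y, in place."""
    c, s = math.cos(theta), math.sin(theta)
    e = cmath.exp(1j * phi)
    yi, yj = y[i], y[j]
    y[i] = e * (c * yi - s * yj)
    y[j] = s * yi + c * yj


def layer_pairs(n, layer):
    """Even layers couple (0,1), (2,3), ...; odd layers couple (1,2), (3,4), ..."""
    return [(k, k + 1) for k in range(layer % 2, n - 1, 2)]


def random_params(n, num_layers, rng):
    """Draw one (theta, phi) per rotation and one phase per output entry."""
    two_pi = 2 * math.pi
    params = [
        [(rng.uniform(0, two_pi), rng.uniform(0, two_pi)) for _ in layer_pairs(n, layer)]
        for layer in range(num_layers)
    ]
    phases = [rng.uniform(0, two_pi) for _ in range(n)]
    return params, phases


def tunable_unitary_apply(x, params, phases):
    """Compute D R_L ... R_1 x without ever forming the N x N matrix.

    Each rotation does constant work for its two angles, so the cost is O(1) per parameter.
    """
    y = list(x)
    n = len(y)
    for layer, rotations in enumerate(params):
        for (i, j), (theta, phi) in zip(layer_pairs(n, layer), rotations):
            rotate_pair(y, i, j, theta, phi)
    # Final diagonal layer of phases.
    return [cmath.exp(1j * w) * v for v, w in zip(y, phases)]


if __name__ == "__main__":
    rng = random.Random(0)
    n = 8
    params, phases = random_params(n, num_layers=4, rng=rng)
    x = [complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(n)]
    y = tunable_unitary_apply(x, params, phases)
    # A unitary map preserves the norm, so the two values agree up to rounding.
    print(sum(abs(v) ** 2 for v in x), sum(abs(v) ** 2 for v in y))
```

In this sketch, a small `num_layers` restricts the model to a subspace of unitary matrices. Adding layers enlarges the reachable set, while each layer still costs only O(N) operations for roughly N parameters.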

Citation

Bibliographic information

Li Jing*, Yichen Shen*, Tena Dubcek, John Peurifoy, Scott Skirlo, Yann LeCun, Max Tegmark, Marin Soljacic. "Tunable Efficient Unitary Neural Networks (EUNN) and their application to RNNs." International Conference on Machine Learning (ICML), 2017.

@inproceedings{jing2017a,
  title={Tunable Efficient Unitary Neural Networks (EUNN) and their application to RNNs},
  author={Jing, Li and Shen, Yichen and Dubcek, Tena and Peurifoy, John and Skirlo, Scott and LeCun, Yann and Tegmark, Max and Soljacic, Marin},
  booktitle={International Conference on Machine Learning},
  year={2017}
}